How Google Works

If Google’s algorithm is proprietary, how do SEO professionals know what factors can help a business optimize for top rankings in the search results? There are several ways.

We can:

- Look at Google’s filed patents, including the initial patent that described how PageRank works.

- Follow Google’s own SEO recommendations from their webmaster guidelines, their blog, and videos Google releases about SEO.

- Review the results of case studies and experiments conducted by some of the companies that offer SEO tools and tips. (See the video and posts below.)

- Refer to experience with clients we’ve seen achieve top rankings. We see local Wisconsin companies jumping to page one in the results by step 4 of The Phases of SEO for a Local Business.

Here’s a talk I recently gave at PechaKucha Madison on this subject. It’s a neat format, 20 slides at 20 seconds apiece, that forces you to be concise.

Google’s prevalence

People search Google to find information over 3.5 billion times per day. With just a few words, Google brings you the most relevant results in under a second from 30 trillion individual web pages.

Before Google, search engines like AltaVista, Ask Jeeves and Lycos crawled the web and indexed the content on web pages. The trouble with that method was that it didn’t sort by relevance very well. If someone mentioned Microsoft 50 times on one page and Microsoft’s website only mentioned itself 10 times, that minor page might appear above Microsoft’s website when someone searched for it. That is why AltaVista is not a verb today.

Larry Page and PageRank

What Google co-founder Larry Page figured out while a graduate student at Stanford was that the web could be similar to academic research papers. Scholars cite other research in their own, and the papers with the most citations are often the most important. Likewise, all else equal, webpages with more links to them are more likely to be authoritative than webpages on the same subject with fewer or no links to them.

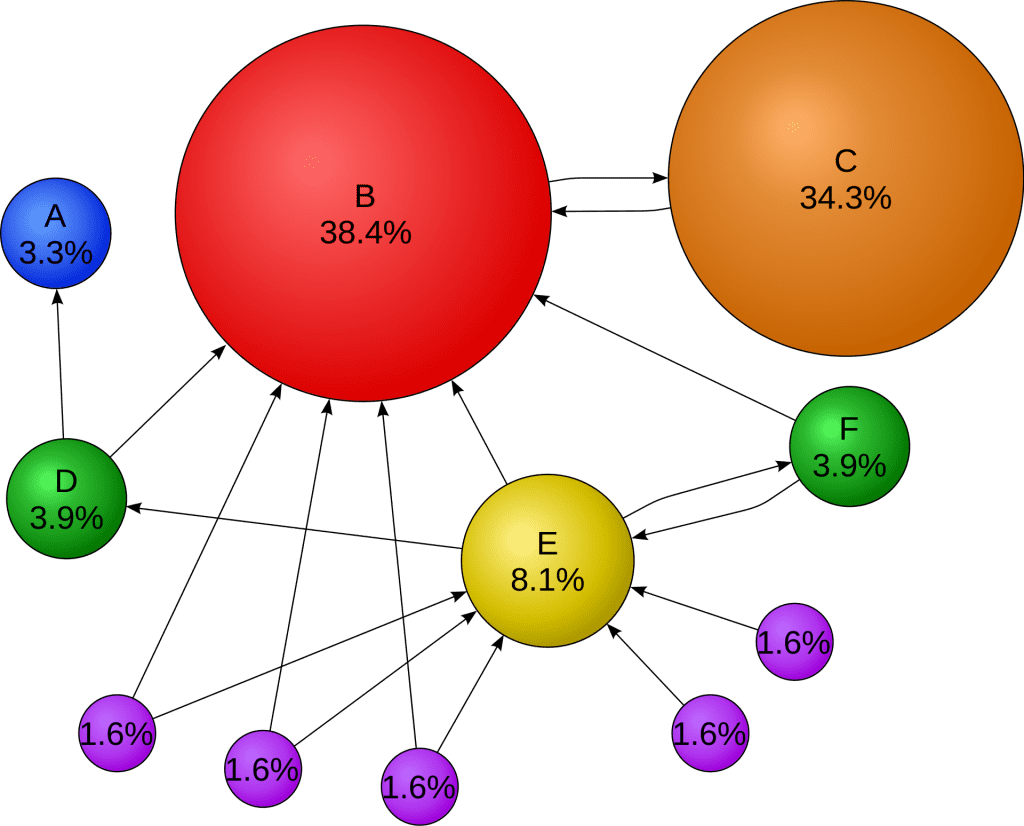

Nor are all links created equal. A minor blog post on a subject might only have the SEO link juice of one of the purple circles above, while a New York Times article on the same subject would be the red circle, with many different links pointing to it. What’s interesting is that the orange circle is so large. That one might be an academic study linked to from the New York Times article; even though it only has one link pointing to it, that powerful endorsement from the Times signals high authority, much higher than the minor blog post (purple circle) with no links from major sources.
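Here’s a rough sketch of that idea in Python. It is a simplified textbook-style PageRank iteration, not Google’s production algorithm, and the page names, the tiny link graph, the damping value of 0.85 and the iteration count are all assumptions made up for illustration.

```python
def pagerank(links, damping=0.85, iterations=50):
    """Simplified PageRank. `links` maps each page to the pages it links to."""
    # Collect every page that appears as a source or as a link target.
    pages = set(links)
    for targets in links.values():
        pages.update(targets)
    pages = sorted(pages)
    n = len(pages)

    # Start with rank spread evenly across all pages.
    rank = {page: 1.0 / n for page in pages}

    for _ in range(iterations):
        # Every page gets a small baseline share each round.
        new_rank = {page: (1.0 - damping) / n for page in pages}
        for page in pages:
            targets = links.get(page, [])
            if targets:
                # A page splits its rank evenly among the pages it links to.
                share = damping * rank[page] / len(targets)
                for target in targets:
                    new_rank[target] += share
            else:
                # A page with no outgoing links spreads its rank over all pages.
                share = damping * rank[page] / n
                for target in pages:
                    new_rank[target] += share
        rank = new_rank
    return rank


# Hypothetical graph: several small blogs link to a news article,
# and the news article links to a single academic study.
links = {
    "blog_a": ["nyt_article"],
    "blog_b": ["nyt_article"],
    "blog_c": ["nyt_article"],
    "minor_blog": ["nyt_article"],
    "nyt_article": ["academic_study"],
    "academic_study": [],
}

ranks = pagerank(links)
for page, score in sorted(ranks.items(), key=lambda item: -item[1]):
    print(f"{page}: {score:.3f}")
```

In this toy graph the study has only one inbound link, yet it scores well above the minor blog because that one link comes from a page that many others point to.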

Click Through Rates & The Value of SEO

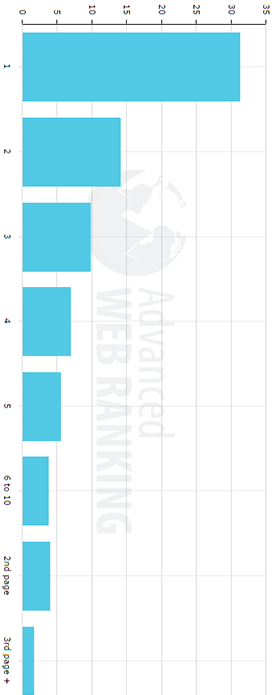

So unlike when we used the old search engines, where it was normal to have to scroll down page 1 or even click to page 3 to see the result we wanted, most people now click on the very top results. It really matters to be on page 1, since fewer than 5% of searches lead to clicks on page 2. In fact, each spot you climb on page 1 can double the clicks to your website!

Here we see the x axis as percent of clicks and the y axis as position in the search results. (See this study to learn more.)

The Emergence of Black Hat SEO

So if it’s worth money to be at the top of Google’s results and it takes links to be there, guess what happened? That’s right, people started buying and selling links in violation of Google’s Webmaster Guidelines.

Here are some examples of black hat SEO tactics we don’t recommend:

- Using invisible page text that search engine crawlers see but human visitors don’t

- Buying links from other websites

- Acquiring expired domains with good PageRank (but on unrelated topics) and selling links or redirecting them to your own site.

- Building up the PageRank of Private Blog Networks (PBNs) loosely covering the same subject or industry, then directing those links to target sites.

- Using different hosting companies and IP addresses to make it look like these other websites are unrelated to you.

Google’s Penguin Update

It didn’t take Google long to find these link schemes and punish them. Remember, Google is the dominant search engine because its results are better than its competitors’ (Bing now copies Google in weighing links as a major factor). That’s why Google rolled out its Penguin update starting in 2012. This update and the iterations that followed punished sites benefiting from black hat link schemes by removing them from Google’s results entirely. Imagine making money from website visitors because you’re king of the results hill one day, then going broke because you’re not on page 1, 2, 3 or anywhere else in the search results anymore.

Sites with Penguin penalties then had to disavow their toxic links and beg Google for approval to be entered back into the results, which often meant waiting many months until the next iteration of Penguin rolled out.

Negative SEO

Because these links were so damaging, Google inadvertently created a new purpose for the link farms and Private Blog Networks: weapons.

Though it’s hotly debated how many sites were really affected by negative SEO, some did find toxic links directed to them, thus sparking a Google penalty when the webmasters weren’t doing anything sketchy themselves. For this reason, very competitive national and international searches sometimes required defensive vigilance to make sure no unwanted incoming links could trigger removal from search results.

Penguin’s latest update now runs continuously rather than in sudden rollouts, and seems to punish only particular pages, not entire domains. For this last reason, these negative SEO weapons are not as powerful as they once were.

SEO Tools Help Point the Way

A number of paid SEO tools have cropped up to help professional SEOs see search results in a way similar to how Google and Bing see them, so that they can help their clients compete for page 1. Tools like Moz, SEMRush, Ahrefs, and Majestic can estimate SEO competitiveness, evaluate on-page factors, spy on competitor keywords and track SEO ranking progress over time.

Of particular importance for our purposes here, they mimic Google and Bing by crawling the web and mapping the interconnections created by links.

Ahrefs 2 Million Keyword Results Analysis

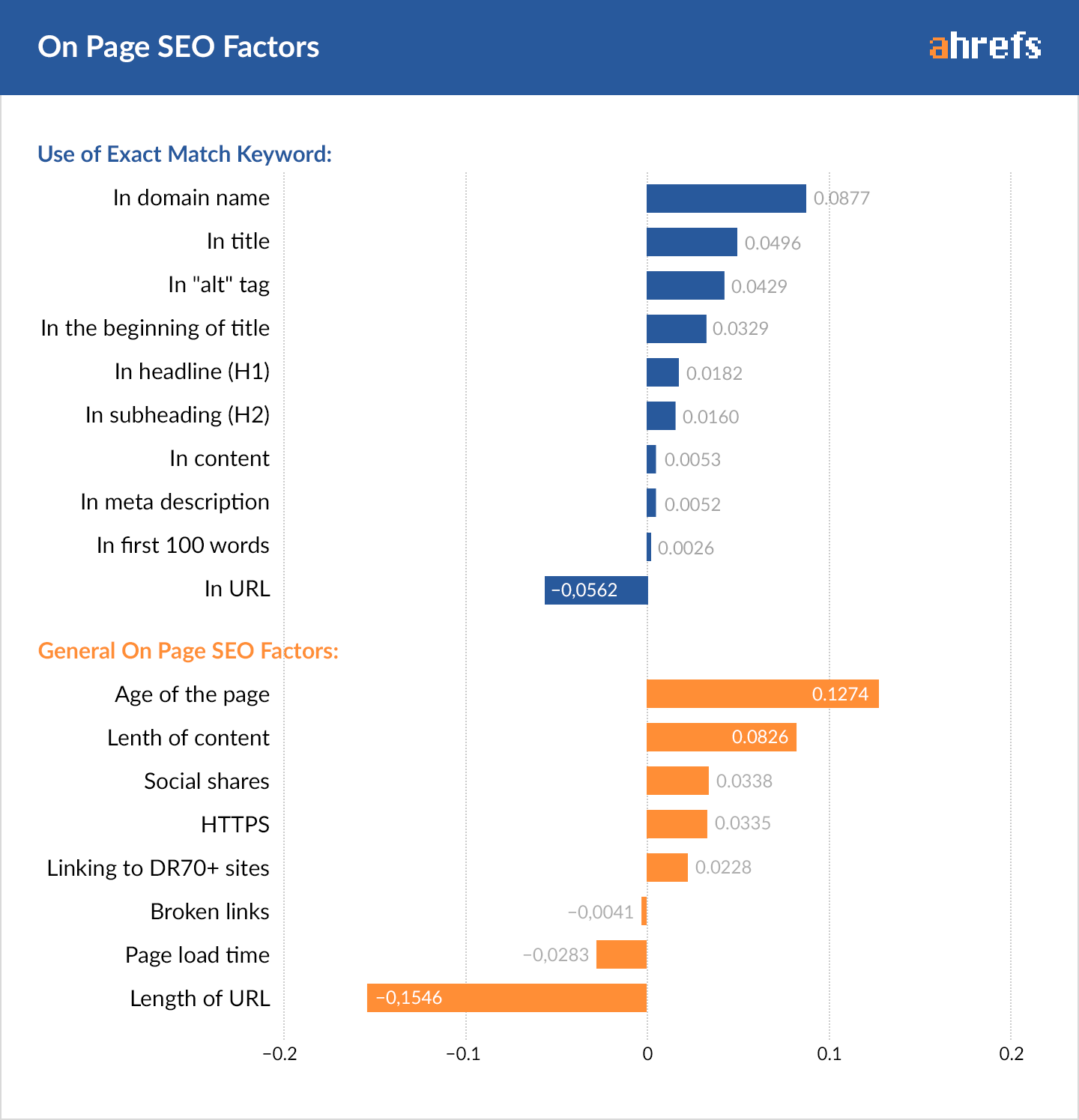

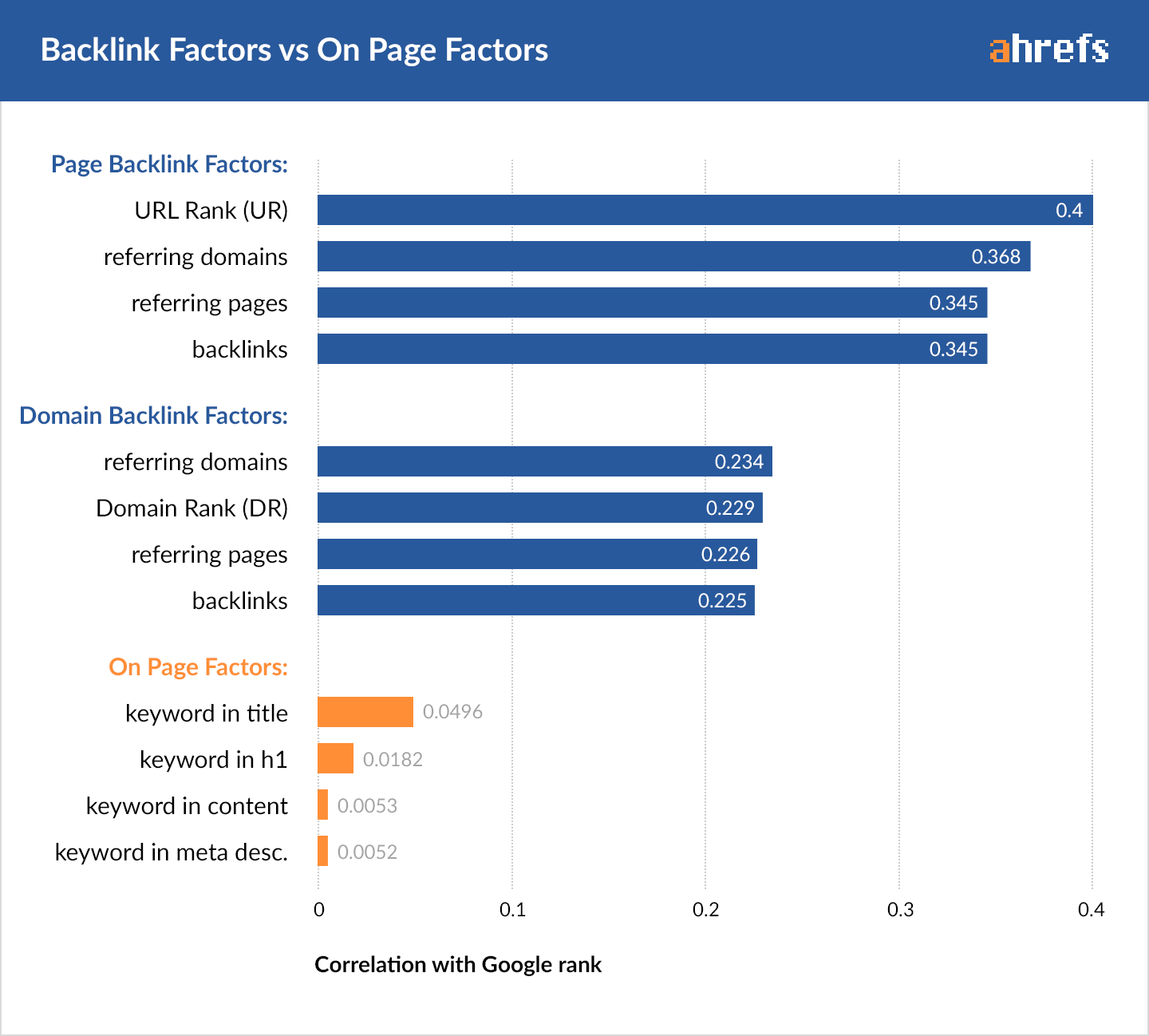

Ahrefs has one of the biggest, if not the biggest, indexes of crawled pages and webpage links. That data is a treasure trove of information they mined to compare on-page SEO factors like page and header titles against off-page factors like the total number of links pointing to the page.

Here are the onsite factors they found correlated with higher results when they analyzed 2,000,000 keywords. (Note how they’re looking at exact matches between the query and webpage, while Google knows synonyms.)

While they found that using commonly searched exact match phrases discovered through keyword research correlated with higher rankings, off-page factors won the day big time. The number of linking domains, pages and total links dwarfed on-page factors like exact keyword in page title.

Check out the full Ahrefs study here.

Brian Dean from Backlinko’s 1 Million Results Study

Brian Dean teamed up with some of the SEO companies mentioned above and ran another study of 1 million results. He likewise found that the number and quality of inbound links differentiated the #1 results from the lower ones.

He also found some other factors that are promising because we have more control over them:

- Word count (higher is better, so get writing!)

- Secure domain (https vs. http)

- URL length (shorter is better, so don’t make your blog posts in a long format like this: /blog/2/19/17/what-we-know-about-google-algorithm)

- Images: always include at least 1 image on every page or blog post

- Exact match keyword in title did show some correlation, just like in the Ahrefs study.

- Page speed: 2 seconds or less of load time is generally considered ideal anyway, and here that’s confirmed.

You don’t want visitors leaving your website without taking actions or viewing more than one page. So make sure each landing page is engaging and encourages visitors to read on to other pages.
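The factors listed above can be turned into a quick self-check for a page. The sketch below is only an illustration: `check_page` is a hypothetical helper, and the 40-character path limit and 1,000-word minimum are arbitrary assumptions, not numbers from the study. Only the 2-second load time and the "at least 1 image" rule come from the list above.

```python
from urllib.parse import urlparse


def check_page(url, word_count, image_count, load_seconds,
               max_path_chars=40, min_words=1000):
    """Return a pass/fail flag per factor (thresholds are assumptions)."""
    parsed = urlparse(url)
    return {
        # Secure domain: https rather than http.
        "secure_domain": parsed.scheme == "https",
        # Shorter URLs: compare the path length to an assumed limit.
        "short_url": len(parsed.path) <= max_path_chars,
        # At least 1 image on the page or post.
        "has_image": image_count >= 1,
        # 2 seconds or less of load time.
        "fast_load": load_seconds <= 2.0,
        # Higher word count: compare to an assumed minimum.
        "long_form": word_count >= min_words,
    }


# Hypothetical pages on a placeholder domain.
weak_page = check_page(
    "http://example.com/blog/2/19/17/what-we-know-about-google-algorithm",
    word_count=400, image_count=0, load_seconds=3.5,
)
strong_page = check_page(
    "https://example.com/google-algorithm",
    word_count=1800, image_count=2, load_seconds=1.4,
)
print(weak_page)
print(strong_page)
```

A checklist like this only flags the factors we control directly. It says nothing about inbound links, which both studies found to matter most.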

You can read Brian’s full study here and the methodology here.

Rand Fishkin from Moz and the Click Through Experiment

It makes sense that Google improves its results by taking user behavior into account. Rand Fishkin from Moz tested this theory by asking his Twitter followers to search a term and click his result. Here’s what happened in a matter of hours.

Whether a result like this stays number 1 is another story, but the experiment likely suggests Google is using click through rate as a ranking factor, so make sure your page titles and meta descriptions invite visitors to click your result!

You can read Rand’s original post here and Larry Kim’s updated study here in which he found “The more your pages beat the expected organic CTR for a given position, the more likely you are to appear in prominent organic positions.”
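To make "beating the expected CTR for a given position" concrete, here is a small sketch. The expected-CTR curve is invented for illustration: it assumes a 30% click rate at position 1 and applies the rule of thumb from earlier in this post that each spot down roughly halves the clicks. Neither the numbers nor the function names come from Larry Kim’s study.

```python
def expected_ctr(position, top_ctr=0.30):
    """Hypothetical expected CTR: halves with each step below position 1."""
    return top_ctr / (2 ** (position - 1))


def ctr_lift(position, impressions, clicks, top_ctr=0.30):
    """Actual CTR minus the hypothetical expected CTR for that position."""
    actual = clicks / impressions
    return actual - expected_ctr(position, top_ctr)


# Made-up example: a result sitting at position 3 with 1,000 impressions
# and 120 clicks.
lift = ctr_lift(position=3, impressions=1000, clicks=120)
print(f"CTR lift over expectation: {lift:+.3f}")
```

A positive lift means the title and description are pulling more clicks than this assumed curve predicts for that position. A negative one suggests they need work.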

Here’s the skeptic’s case against CTR as a ranking factor.

Conclusion

- Create engaging, long-form content that answers prospective buyers’ questions but doesn’t hard-sell them on your solutions.

- Don’t skip writing alluring Page Titles and Descriptions, because that’s what people see first in the search engines before they decide to come to your site.

- Plan and practice constant outreach to build links to your website and your top web pages from other websites.
